From Bits to Atoms: Physical AI

We mapped 90+ startups pioneering Physical AI, from simulation to embodied intelligence, with funding, company, and founder details. Access the list here.

At CES in January, Jensen Huang told the world the ChatGPT moment for physical AI was nearly here. Two months later, on the GTC stage in March 2026, he dropped the qualifier: "Physical AI has arrived," he said. "Every industrial company will become a robotics company."

Huang is one of the most consistent bellwethers for where AI compute demand is heading next. And the numbers back him up. Robotics and Physical AI pulled in ~$40.7B of investment in 2025, up 74% year-over-year, which is nearly 9% of all global VC, outpacing every other deep-tech sector.

Recent model breakthroughs are compounding that progress. GEN-1 showed that scaling laws exist in robotics: more data and more compute yield better robots, the same dynamic powering the LLM race. Physical Intelligence's π0.5, meanwhile, handled new environments without retraining. NVIDIA's GR00T N2, a next-generation humanoid robot foundation model unveiled at GTC, can help robots succeed at new tasks in new environments more than twice as often as leading vision-language-action models from just a year prior.

Today's Physical AI systems can follow natural language instructions, adapt to unfamiliar objects, and recover from a nudge mid-task. And with the lab-to-commercial deployment gap narrowing for the first time, every serious investor, industrial conglomerate, and frontier AI lab is paying attention.

What is Physical AI?

Physical AI refers to AI systems that perceive, reason, and act in the real world by combining intelligence (models) with embodiment (robots, machines, or devices) to execute complex tasks autonomously. Where generative AI helps you generate text and images, Physical AI is the version that actually pours your coffee, folds laundry, or drives a delivery truck safely in the real world.

At its core, Physical AI follows a fundamental Sense → Think → Act loop:

Perception: Sensors and vision systems gather information from the environment (cameras, LiDAR, tactile sensors, and more) to build an understanding of the world.

Cognition: Models and planning algorithms process the sensor data, reason about it, and decide what actions to take.

Actuation: Robotics hardware and control systems execute those decisions through physical movement, including arms, hands, legs, and full robot bodies.
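To make the loop concrete, here is a minimal sketch in Python. Everything in it is a hypothetical stand-in: `ToyRobot` simulates a robot on a one-dimensional track, and `think` is a simple proportional rule in place of a learned model or planner. It only illustrates how the three stages hand off to one another, not how any production system is built.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """Hypothetical sensor reading: distance to the target in meters."""
    distance_m: float


@dataclass
class Action:
    """Hypothetical motor command: forward velocity in meters per second."""
    velocity_mps: float


class ToyRobot:
    """Stand-in for real hardware: a robot on a 1-D track approaching a target."""

    def __init__(self, distance_m: float) -> None:
        self.distance_m = distance_m

    def sense(self) -> Observation:
        # Perception: read the (simulated) range sensor.
        return Observation(distance_m=self.distance_m)

    def act(self, action: Action, dt_s: float) -> None:
        # Actuation: apply the velocity command for one time step.
        self.distance_m -= action.velocity_mps * dt_s


def think(obs: Observation, max_speed_mps: float = 0.5) -> Action:
    # Cognition: a proportional rule standing in for a model or planner.
    # Move at the speed cap when far away and slow down near the target.
    return Action(velocity_mps=min(max_speed_mps, obs.distance_m))


def run_loop(robot: ToyRobot, steps: int, dt_s: float = 0.1) -> float:
    """Run the Sense -> Think -> Act loop and return the remaining distance."""
    for _ in range(steps):
        obs = robot.sense()       # Sense
        action = think(obs)       # Think
        robot.act(action, dt_s)   # Act
    return robot.distance_m


if __name__ == "__main__":
    remaining = run_loop(ToyRobot(distance_m=2.0), steps=100)
    print(f"distance remaining: {remaining:.4f} m")
```

In a real system, each stage would be far heavier (camera and LiDAR pipelines, a VLA or planner, and a hardware controller), but the control flow keeps this shape.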

While investment is flowing across all three layers, the majority of capital and attention in Physical AI today is concentrated in the cognition layer. Most innovation is happening through three key model architectures.

| Model Type | Description |
|---|---|
| Vision-Language Models (VLMs) | Multimodal models that combine visual understanding with natural language. They serve as the perception layer, allowing robots to interpret scenes and follow language instructions. |
| Vision-Language-Action Models (VLAs) | Advanced models that extend VLMs by adding motor control. They translate vision and language inputs directly into physical actions, enabling robots to execute tasks based on natural commands. |
| World Models | Predictive models that simulate how environments and objects behave over time. They help robots anticipate outcomes, plan multi-step actions, reason about physics, and recover from errors. |

There’s also a fourth, emerging approach: Robot Foundation Models, which are large-scale, pre-trained models that integrate multiple approaches (VLMs + VLAs + world models) into a unified "robot brain" for general manipulation, coordination, and autonomous decision-making.
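One way to read the distinctions between these model types is by what each takes in and what it returns. The sketch below expresses that as Python type aliases and adds a toy planner that uses a world model to "imagine" outcomes before acting. All names here (`VLM`, `VLA`, `WorldModel`, `plan_with_world_model`, `toy_world_model`) are hypothetical and heavily simplified. Real systems use learned neural networks and much richer search than repeating one action over a short horizon.

```python
from typing import Callable, List, Sequence

# Hypothetical, simplified types for illustration only.
Image = List[List[float]]   # camera frame as a grid of pixel intensities
State = float               # e.g. gripper position along one axis
ActionCmd = float           # e.g. commanded displacement along that axis

# VLM: (image, instruction) -> text description or answer
VLM = Callable[[Image, str], str]
# VLA: (image, instruction) -> motor action
VLA = Callable[[Image, str], ActionCmd]
# World model: (state, action) -> predicted next state
WorldModel = Callable[[State, ActionCmd], State]


def plan_with_world_model(
    world_model: WorldModel,
    state: State,
    goal: State,
    candidates: Sequence[ActionCmd],
    horizon: int = 3,
) -> ActionCmd:
    """Pick the candidate whose imagined rollout ends closest to the goal.

    Each candidate action is repeated for `horizon` steps inside the world
    model; nothing is executed on a real robot during planning.
    """

    def rollout_error(action: ActionCmd) -> float:
        predicted = state
        for _ in range(horizon):
            predicted = world_model(predicted, action)
        return abs(goal - predicted)

    return min(candidates, key=rollout_error)


def toy_world_model(state: State, action: ActionCmd) -> State:
    # Toy dynamics (an assumption): the state moves by exactly the command.
    return state + action


if __name__ == "__main__":
    best = plan_with_world_model(
        toy_world_model,
        state=0.0,
        goal=0.6,
        candidates=[-0.1, 0.0, 0.1, 0.2, 0.3],
    )
    print(f"chosen action: {best}")
```

The design point is the split of responsibilities: a VLA maps observations straight to actions, while a world model lets a planner compare predicted outcomes first. A robot foundation model, as described above, would fold both into one system.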

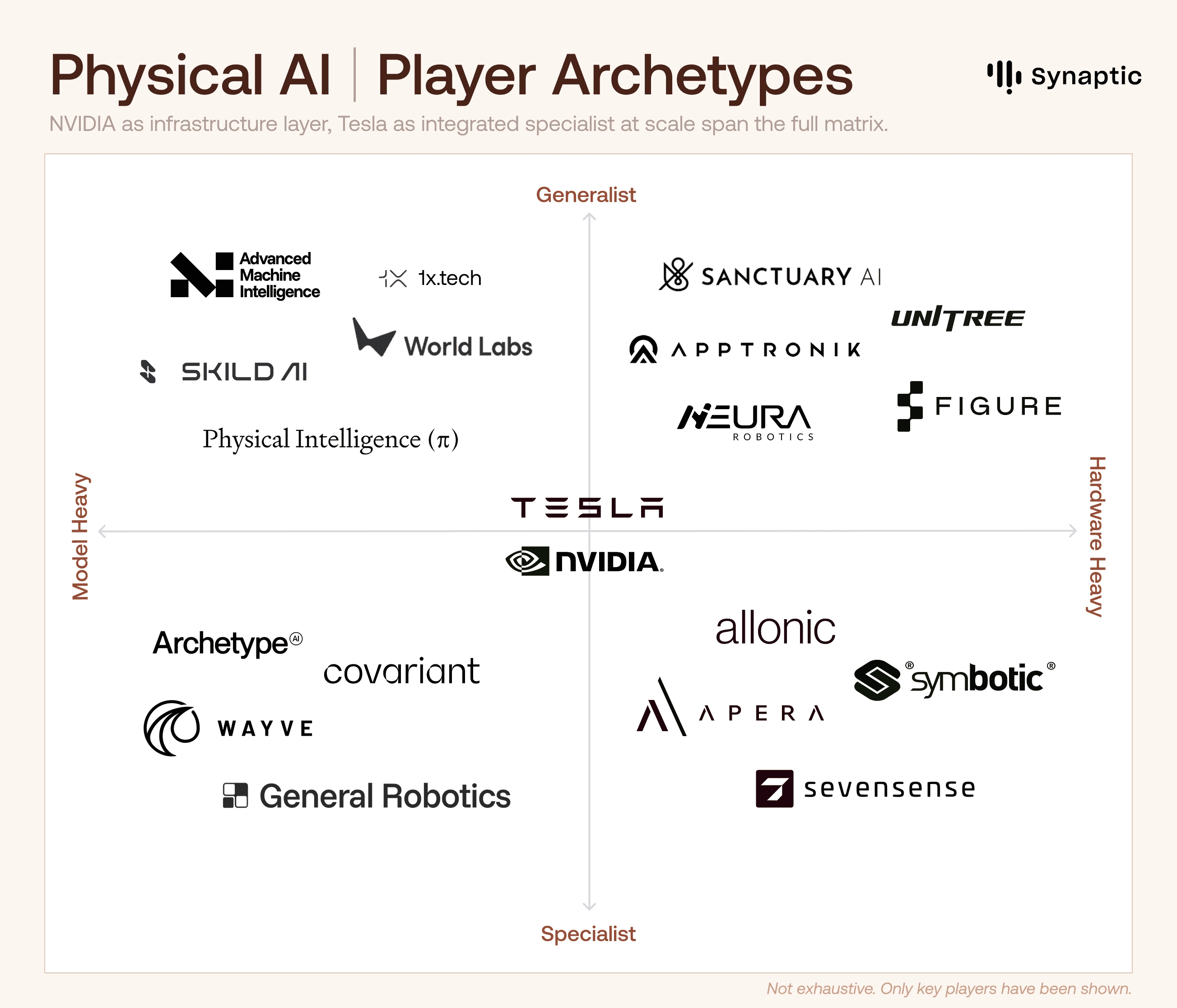

Player Archetypes: New-age startups are leading the charge, but not playing the same game

We can split the landscape into four archetypes based on what they choose to build vs. control.

AI-native / Model-Heavy Players (top-left): Focused on building foundational intelligence. They are developing advanced VLMs, VLAs, and world models, often with minimal hardware ownership, betting on the best “brain” to win.

Hardware-Heavy Players (right side): Prioritizing the design and manufacturing of physical robot bodies, from dexterous hands to full humanoids. They carry higher capital intensity and longer development cycles, but own the critical embodiment piece.

Specialist Players (bottom half): Specialists deliver deep technical breakthroughs in targeted areas rather than attempting broad general-purpose capabilities.

Full-Stack and Integrated Players: Combining strong models with integrated hardware and deployment capabilities, aiming to deliver complete, ready-to-deploy robotic solutions.

Tesla and NVIDIA span multiple quadrants. NVIDIA dominates the infrastructure layer across the entire matrix, while Tesla is attempting to build an integrated specialist at a massive scale.

While the ecosystem is still young, with different archetypes racing along distinct paths, there are two major competitions ongoing: one for intelligence (foundation models, VLAs, world models) and one for embodiment (hardware design, manufacturing, and deployment at scale).

AI-native startups are winning the intelligence layer

They’re building powerful foundation models, VLAs, and world models with minimal hardware ownership, betting on model quality for differentiation. Physical Intelligence is betting on superior intelligence to become the ultimate moat, as hardware becomes commoditized. Skild AI reportedly reached ~$30 million ARR within months of its commercial launch. World Labs, founded by Fei-Fei Li, raised $1B to pioneer 3D world models that enable robots to understand and navigate complex physical spaces from video data alone.

Hardware-First/Embodied startups are racing to perfect the physical body

They’re focusing on dexterity, durability, manufacturing scale, and real-world reliability. Figure AI ($1.9B raised, $39B valuation) is the most capitalized pure-play humanoid company. Agility Robotics is already deploying Digit in Amazon warehouses. Unitree Robotics has aggressive price-performance, while Apptronik and Sanctuary AI are both targeting enterprise manufacturing.

Big Tech is building the rails

Incumbents are constructing the underlying infrastructure instead of building end-to-end robots. Amazon acquired Covariant’s technology instead of building from scratch, while OpenAI chose to invest in Physical Intelligence. Like cloud computing, the infrastructure providers may ultimately capture more durable value than any single application layer winner.

A growing cohort of full-stack startups is also attempting to bridge intelligence with hardware

Players like DYNA Robotics, Mbodi, and Generalist AI are combining strong AI models with integrated hardware and deployment platforms to deliver complete, production-ready solutions. They’re aiming to control the entire flywheel of data, intelligence, and deployment.

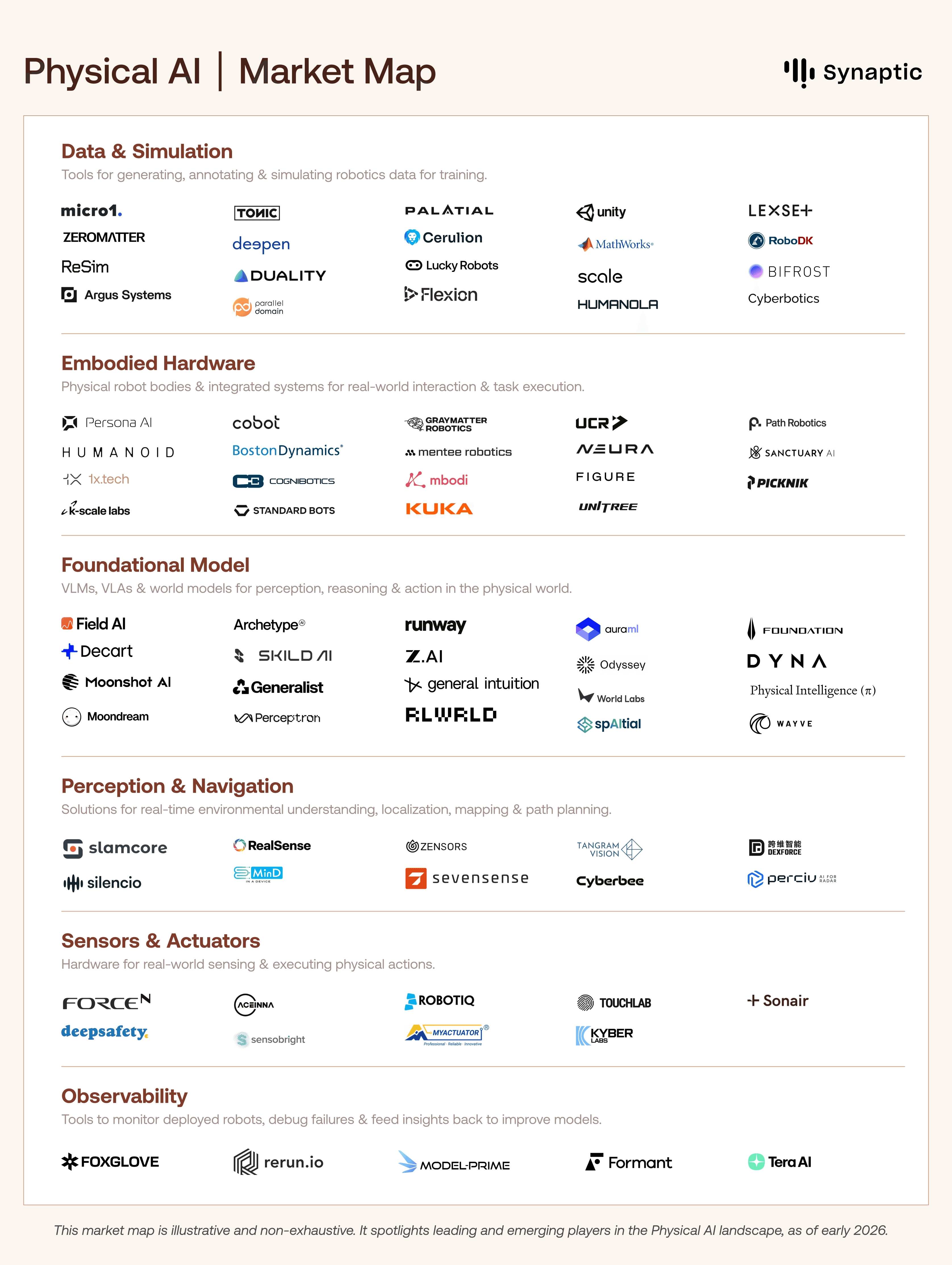

Market Map: A vibrant, competitive mix of agile startups and powerful incumbents

Across the stack, startup activity is distributed but tightly interconnected, with startups and incumbents contributing across data, models, hardware, and deployment.

Big Tech and incumbents are playing a powerful supporting role

Established players like Boston Dynamics, ABB, and FANUC bring decades of robotics expertise and industrial reach. Microsoft and OpenAI are also investing strategically rather than building everything themselves.

Foundation models are the most crowded layer right now

This is where the largest checks are going, and where winner-takes-most dynamics are most likely to play out. Investment in world models and vision-language-action (VLA) models jumped from $1.4B in 2024 to $6.9B in 2025, a roughly 5x increase in a single year.
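As a quick sanity check on that multiple, the arithmetic can be reproduced from the two figures quoted above. The helper names below are hypothetical, and the inputs are simply the $1.4B and $6.9B totals from this section.

```python
def growth_multiple(previous: float, current: float) -> float:
    """Return how many times larger `current` is than `previous`."""
    return current / previous


def yoy_growth_pct(previous: float, current: float) -> float:
    """Return year-over-year growth as a percentage."""
    return (current / previous - 1.0) * 100.0


if __name__ == "__main__":
    investment_2024_busd = 1.4  # world model + VLA investment, 2024 ($B)
    investment_2025_busd = 6.9  # world model + VLA investment, 2025 ($B)

    multiple = growth_multiple(investment_2024_busd, investment_2025_busd)
    pct = yoy_growth_pct(investment_2024_busd, investment_2025_busd)
    print(f"{multiple:.1f}x, +{pct:.0f}% year over year")  # 4.9x, +393% year over year
```

The ratio comes out at about 4.9x, which is what the rounded 5x figure reflects.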

Players like Skild AI and Physical Intelligence are developing universal foundation models that generalize across robot morphologies, with Skild powering scalable learning from internet-scale video data.

World Labs and AMI Labs are pioneering world models for 3D spatial reasoning, enabling robots to understand and manipulate complex environments.

Data and Simulation is the layer most investors underestimate

Models are only as good as the data they train on, and real-world robot data is scarce and expensive to collect. Companies like Scale, Zeromatter, Duality, and Parallel Domain are building the synthetic data pipelines and simulation environments that let foundation models train at scale without deploying a single physical robot.

Perception and edge execution are accelerating

Real-time sensing reduces latency and cloud dependency, enabling safe operation in unpredictable settings. Startups like RealSense (Intel), Sevensense Robotics, Zensors, and Tangram Vision are advancing multimodal perception for localization and mapping.

Audio-based and multimodal sensing players like Silencio and MinD are expanding how machines interpret surroundings. Meanwhile, Qualcomm, Apple, and NVIDIA are integrating AI accelerators into devices, signaling a push toward always-on physical intelligence in consumer and industrial hardware.

Physical AI is already powering autonomous execution, from warehouses to hospitals, optimizing real-time decision-making at scale.

NVIDIA's robotics stack powers Figure AI and 1X Technologies, while Tesla's Optimus pushes general-purpose humanoids toward factory deployment.

Wayve and Tesla are using AI models to interpret sensor data and control vehicles in real time, continuously learning from large-scale deployment.

Logistics platforms like Field AI and Covariant let robots of any type navigate unstructured, dynamic warehouses.

In manufacturing, Bright Machines and Siemens Industrial Edge use VLA models for adaptive assembly lines.

Amazon Robotics and Agility Robotics dominate logistics autonomy, with billions deployed across warehouses.

In defense, Anduril's drones and Sanctuary AI's systems enable mission-critical autonomy in contested environments.

In healthcare, Diligent Robotics’ Moxi handles repetitive tasks and Mendaera's Focalist assists with precision surgery.

As deployments scale, observability and monitoring tools are also emerging. Platforms like Formant, Foxglove, rerun.io, ModelPrime, and Tera AI are focused on tracking robot performance, debugging failures, and feeding operational data back into training systems.

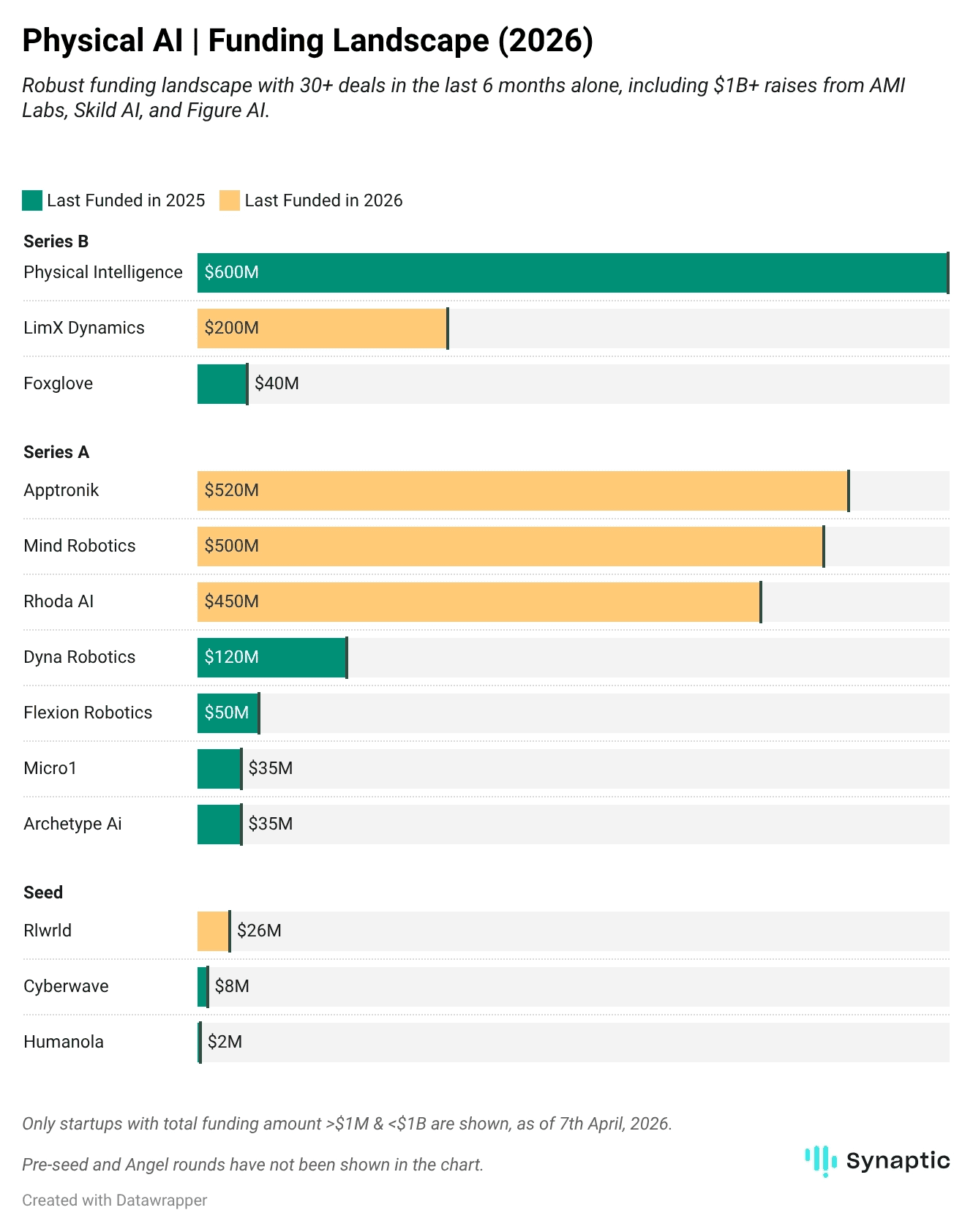

Funding Landscape: Capital concentrates in foundation models as strategic buyers race ahead

In 2025 alone, the sector saw multiple $500M–$1B+ rounds, with capital flowing disproportionately to a small number of frontier players. This momentum continues into 2026.

Physical Intelligence is in talks to raise ~$1B at a valuation above $11B, roughly double the $5.6B valuation of its $600M round in November 2025 (backed by Founders Fund, Thrive Capital, Lux Capital, and CapitalG).

AMI Labs (co-founded by Yann LeCun) raised $1.03B at a $3.5B valuation, led by Bezos Expeditions, Cathay Innovation, and Greycroft.

World Labs (founded by Fei-Fei Li) closed roughly $1 billion with backing from Nvidia, AMD, Fidelity, Autodesk ($200M), and a16z.

Figure AI raised nearly $1 billion at a ~$39 billion valuation.

Skild AI raised $1.4B in Series C at a $14B valuation, led by SoftBank with participation from Nvidia Ventures, Macquarie Capital, and Jeff Bezos.

Mind Robotics (Rivian spinout) and Rhoda AI each raised $450–500 million Series A rounds.

Strategic investors are locking in early access

Khosla Ventures, Sequoia Capital, Founders Fund, CapitalG, SoftBank, and Eclipse Ventures have been among the most active investors, participating in multiple foundational rounds. Amazon, Mercedes-Benz, Autodesk, and NVIDIA's NVentures are also securing early access to capabilities they'll need to build or deploy at scale.

NVIDIA's platform bet is the safest position in the ecosystem

By open-sourcing GR00T, releasing Cosmos as infrastructure, and embedding itself in the training pipelines of Figure, 1X, Agility, and Skild, NVIDIA is building the same structural position it has in software AI: becoming the stack everyone builds on, regardless of who wins at the application layer.

M&A is picking up fast

Most incumbents are on a buying spree and not waiting for a winner to emerge.

Hugging Face acquired Pollen Robotics to pair its model ecosystem with open-source hardware.

SoftBank paid $5.4B for ABB's robotics division, stacking it onto a portfolio that already includes Skild AI and Agile Robots.

Mobileye acquired Mentee Robotics for $900M, betting that autonomous driving and humanoid robots run on the same AI stack.

Key Challenges

The sim-to-real gap is the central unsolved problem

From uncontrolled lighting and surface variation to unexpected objects and clumsy humans, the physical world is adversarial in many ways, and genuinely difficult to simulate. Robots that nail 95% of tasks in simulation typically manage only 30–50% in the real world. NVIDIA's Cosmos and Genesis AI's physics engine are attacking this with better synthetic data, but it remains unsolved.

Economics aren't proven yet

Supply chain fragility and high component costs (especially actuators, sensors, and compute hardware) keep unit economics challenging. Most humanoid robots today still cost hundreds of thousands of dollars, yet run for only 2 to 5 hours on a charge, while industrial shifts typically run 8 hours or longer. Manufacturing scale, safety certification timelines, and supply chain concentration (~90% of components from China) all add cost and risk that current ARR doesn't yet absorb.

Data is the biggest constraint right now

Robot models require massive amounts of real-world physical interaction data, which is slow and costly to collect. This is why leading companies are investing heavily in teleoperation fleets and large-scale synthetic data generation. NVIDIA's acquisition of Gretel (~$320M) and Meta's $14.8B stake in Scale are among the major recent bets on data infrastructure.

Edge compute remains a major bottleneck

Running large multimodal models (vision, force, proprioception) with low latency on robots is extremely challenging under tight power and thermal constraints. Many systems still rely on cloud offloading, which limits their use in safety-critical or disconnected environments.

Physical AI is one of the most exciting frontiers in technology today. However, breakthroughs in intelligence and physical execution must converge for the technology to actually deliver on its promise.